
Extracting xferlog fields

As an example, let’s look at an xferlog generated by ProFTP. (I’ve added linebreaks throughout this post to make it more readable. The actual examples would be all one line without the ‘’ characters.) The xferlog format consists of fourteen fields, all of which we may be interested in searching on at some point. The long way to get those fields would be to write thirteen individual field extractions, one for each field. (The date is parsed automatically by Splunk, so we’ll leave that one alone.) The better way is to create one long regular expression that can extract all of the fields that we’re interested in at once. The documentation on doing this using the Interactive Field Extractor is pretty sparse (read: non-existent). After experimenting with the IFE, I found that Splunk was expecting a regular expression that looked something like: ^ (?P +)(?:* )(?P +) When I tried to enter that into the IFE the form began to act squirrelly after the eighth field and wouldn’t let me add anything else.
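To make the "one long regular expression" idea concrete, here is a minimal sketch in Python rather than Splunk, since Python's re module accepts the same (?P<name>...) named-group syntax. It is not the expression from this post: the sample line is invented, the field names are assumptions based on the standard xferlog layout, and it assumes the filename contains no spaces.

```python
import re

# Hypothetical xferlog line, invented for illustration (not taken from the post).
SAMPLE = (
    "Mon Jan  5 10:15:42 2009 3 192.0.2.10 1048576 "
    "/home/alice/report.pdf b _ i r alice ftp 0 * c"
)

# Assumed names for the thirteen non-date fields, in xferlog order.
FIELDS = [
    "transfer_time", "remote_host", "file_size", "filename",
    "transfer_type", "special_action_flag", "direction", "access_mode",
    "username", "service_name", "authentication_method",
    "authenticated_user_id", "completion_status",
]

# Skip the five whitespace-separated timestamp tokens, then capture one
# whitespace-delimited token per field using a named group for each.
DATE_PREFIX = r"^(?:\S+\s+){5}"
FIELD_GROUPS = r"\s+".join(r"(?P<{}>\S+)".format(name) for name in FIELDS)
XFERLOG_RE = re.compile(DATE_PREFIX + FIELD_GROUPS + r"$")


def parse_xferlog(line):
    """Return a dict of field name -> value, or None if the line does not match."""
    match = XFERLOG_RE.match(line.strip())
    return match.groupdict() if match else None


fields = parse_xferlog(SAMPLE)
print(fields["remote_host"], fields["filename"], fields["completion_status"])
```

Building the pattern from a list of names keeps the long expression readable, and every field comes out of a single match, which is the same idea as one field extraction that yields many fields.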

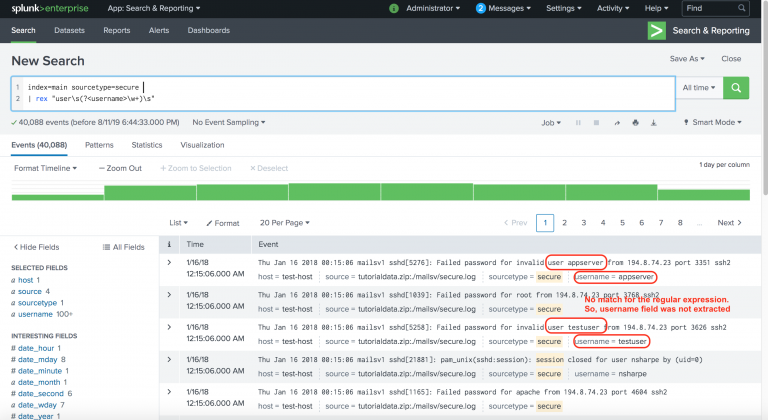

As a SysAdmin, one of the cooler tools that I’ve worked with is Splunk. A project I’m on indexes absolutely every log that it generates into Splunk, from firewall logs to system logs to custom application logs. Splunk does an excellent job of identifying the format of the data we ingest and automatically extracting fields for log types that it knows about. Our custom application logs, though, need a little massaging before we can put them to use. That’s where field extractions come in handy.

Field extractions allow you to define a regular expression to run against log messages and extract fields that you define. If our custom logs contain a hostname and an error code, for example, field extractions let me pull those values from the logs and then write searches based on them. For a quick overview, check out the Splunk video on using the Interactive Field Extractor (IFE). One feature of field extractions that I just discovered is the ability to extract multiple fields from one field extraction. This allows you to parse an entire log message into its component fields using just one field extraction statement.

Need help to extract fields between commas (,). The raw data below has two results, FAILURE and SUCCESS. I want to create some select fields and stats them into a table. So far I have been able to use the following regular expression to extract USERNAME (in this example "xxxyyy" is the username, extracted from between the 5th and 6th commas) and MACADDRESS (in this example "54-26-96-1B-54-BC", extracted between the 8th and 9th commas). Here are the challenges I am facing when I want to extract the SUCCESS/FAILURE and cause fields:

For SUCCESS, I want to extract SUCCESS between the 18th and 19th commas, and the services field between the 19th and 20th commas.

For FAILURE, I want to extract FAILURE between the 17th and 18th commas, and the cause field between the 19th and 20th commas.

So far, the following regex has given me a table with TIME STAMP, MACADDRESS and USERNAME (as mentioned above): sourcetype="aaa-AuthAttempts" MSIAuth NOT TWCWiFi-Passpoint failure | rex "MSIAuth\,\d+\.\d+\.\d+\.\d+\,(?+)\,(?+)\,0\/0\/0\/\d+\,\w\d+\w+\d+\.\w+\.\w+\.\w+\,(?+)" | stats count by _time, MACADDRESS, USERNAME

Can anyone please help to add columns to the table with SUCCESS, FAILURE and other fields based on the pattern of the raw data outlined above? Such as:

Don't feel like you have to do it all in one rex command. You could do: | rex "MSIAuth.*,(?SUCCESS|FAILURE),"

You can also do some testing by using makeresults, eval & append to create your test data: | makeresults count=1 (Ignore _time in this example; it is created by makeresults.) The rex statements in the example are fairly 'loose', but if you know your data, you can make them more specific as required.
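The advice about not doing everything in one rex command can be tried outside Splunk. The sketch below uses Python's re module as a stand-in for rex. The events are invented and much shorter than the real raw data, so the comma positions do not match the question. USERNAME and MACADDRESS come from the question; the group name RESULT is an assumption.

```python
import re

# Hypothetical comma-delimited events, invented for illustration. The real
# raw data from the question is not reproduced here.
EVENTS = [
    "MSIAuth,10.0.0.1,xxxyyy,54-26-96-1B-54-BC,SUCCESS,wifi",
    "MSIAuth,10.0.0.2,aaabbb,00-11-22-33-44-55,FAILURE,bad-password",
]

# Two separate, fairly loose patterns, each responsible for its own fields,
# instead of one long pattern that must match the whole event.
USER_MAC_RE = re.compile(
    r"MSIAuth,[^,]+,(?P<USERNAME>[^,]+),(?P<MACADDRESS>[^,]+)"
)
RESULT_RE = re.compile(r"MSIAuth.*,(?P<RESULT>SUCCESS|FAILURE),")


def extract(event):
    """Apply each pattern independently and merge whatever fields it finds."""
    fields = {}
    for pattern in (USER_MAC_RE, RESULT_RE):
        match = pattern.search(event)
        if match:
            fields.update(match.groupdict())
    return fields


rows = [extract(event) for event in EVENTS]
for row in rows:
    print(row)
```

Because each pattern is applied on its own, an event that fails one pattern still yields the fields matched by the other, which is the practical benefit of splitting the extraction.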
